America’s tech sector is stepping into a new phase of the AI race with Nvidia’s latest hardware, and the gap with China is about to widen in ways that matter to households, jobs and national power.

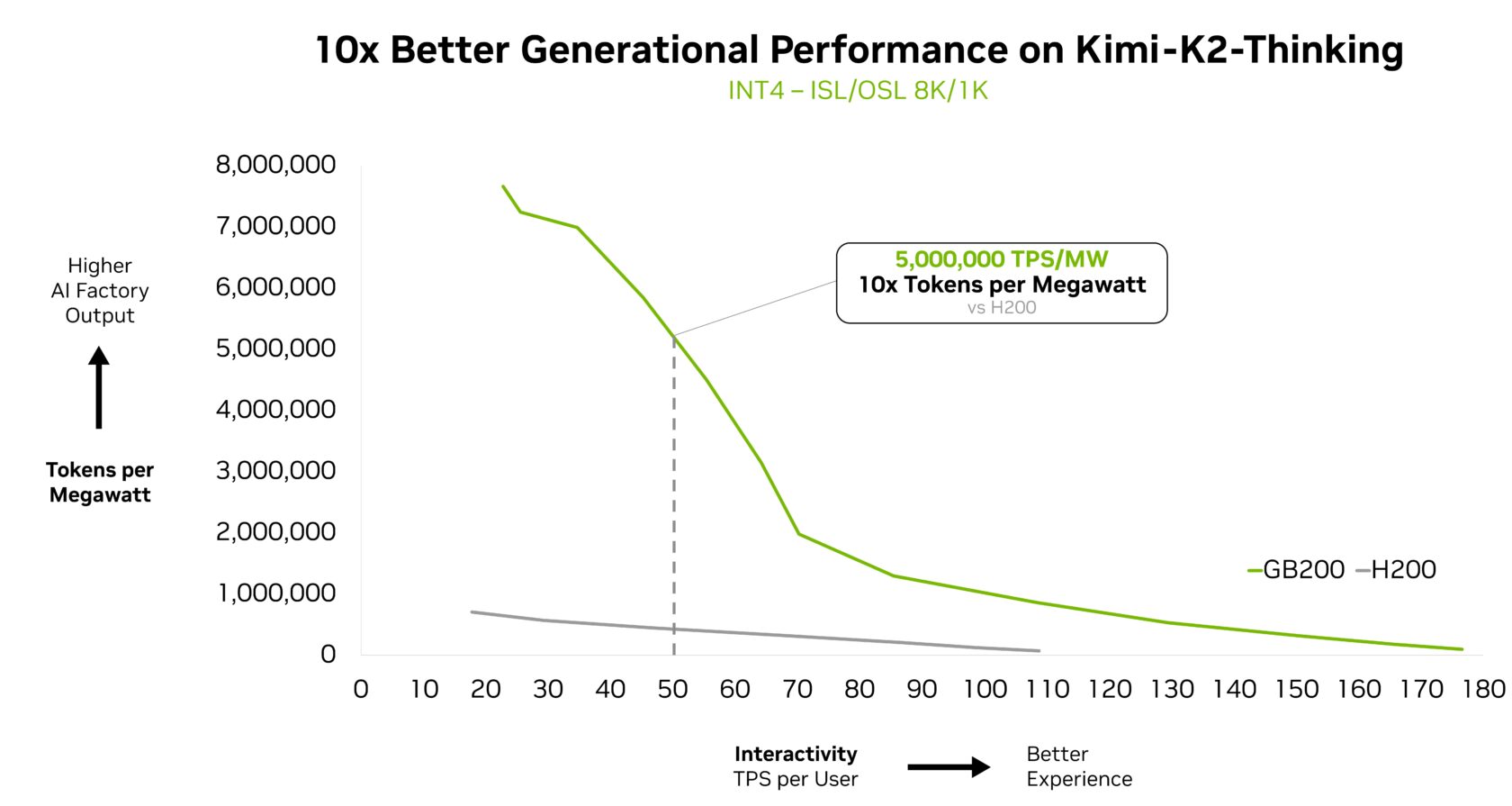

In a new update, Nvidia says its GB200 NVL72 hardware platform can run the most advanced artificial intelligence models up to ten times faster while using far less energy.

Nvidia presents the system as a turning point, saying GB200 NVL72 “combines hardware and software optimizations for maximum performance and efficiency, making it practical and straightforward to scale MoE models.”

MoE, or mixture of experts, is loosely comparable to how the human brain works: the model activates only the small group of specialist sub-networks, or "experts," needed for each task instead of running every part of the network every time. Nvidia says this shift delivers a huge leap in efficiency and performance.
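For readers who want to see the idea in code, the selective activation described above can be sketched in a few lines of Python. This is a toy illustration only, not Nvidia's implementation or the design of any particular model: the eight "experts" are simple stand-in functions, and the router scores, the `route_top_k` and `moe_forward` helpers, and the choice of two active experts are all assumptions made for the example.

```python
import math

def softmax(scores):
    """Turn raw router scores into probabilities that sum to 1."""
    m = max(scores)
    exps = [math.exp(s - m) for s in scores]
    total = sum(exps)
    return [e / total for e in exps]

def route_top_k(gate_scores, k):
    """Return (expert_index, weight) pairs for the k highest-scoring experts."""
    probs = softmax(gate_scores)
    top = sorted(range(len(probs)), key=lambda i: probs[i], reverse=True)[:k]
    norm = sum(probs[i] for i in top)  # renormalize over the chosen experts
    return [(i, probs[i] / norm) for i in top]

def moe_forward(x, experts, gate_scores, k=2):
    """Blend the outputs of the selected experts; the others are never called."""
    routes = route_top_k(gate_scores, k)
    output = sum(w * experts[i](x) for i, w in routes)
    return output, [i for i, _ in routes]

# Eight toy "experts" (hypothetical): each just scales its input differently.
NUM_EXPERTS = 8
experts = [lambda x, f=f: x * f for f in range(1, NUM_EXPERTS + 1)]

# Made-up router scores for a single input; a real model learns these.
gate_scores = [0.1, 2.0, -1.0, 0.3, 1.5, -0.5, 0.0, 0.2]

output, used = moe_forward(3.0, experts, gate_scores, k=2)
print(f"experts used: {used} of {NUM_EXPERTS}")  # experts used: [1, 4] of 8
```

Only two of the eight experts do any work for this input, which is where the efficiency gain comes from. In a real model, each expert is a large neural network layer, so skipping most of them saves substantial compute.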

Nvidia, the most valuable company in the world, notes that the top open-source models are already using MoE and that many of them will see a large performance gain when run on GB200 NVL72. Specifically, Nvidia says the Chinese models Kimi K2 Thinking and DeepSeek R1 will see a 10x performance leap.

But the breakthrough comes at a time when the United States has placed strict export controls on advanced chips and interconnect systems. China cannot access Blackwell, NVLink Switch or the rack-scale designs needed to deliver the tenfold performance gain. That leaves a large gap between what American companies can build and what China can legally acquire.

Meanwhile, American firms are now getting their hands on Nvidia’s new MoE hardware.

“To bring this performance to enterprises worldwide, the GB200 NVL72 is being deployed by major cloud service providers and NVIDIA Cloud Partners, including Amazon Web Services, Core42, CoreWeave, Crusoe, Google Cloud, Lambda, Microsoft Azure, Nebius, Nscale, Oracle Cloud Infrastructure, Together AI and others.”

Disclaimer: Opinions expressed at CapitalAI Daily are not investment advice. Investors should do their own due diligence before making any decisions involving securities, cryptocurrencies, or digital assets. Your transfers and trades are at your own risk, and any losses you may incur are your responsibility. CapitalAI Daily does not recommend the buying or selling of any assets, nor is CapitalAI Daily an investment advisor.