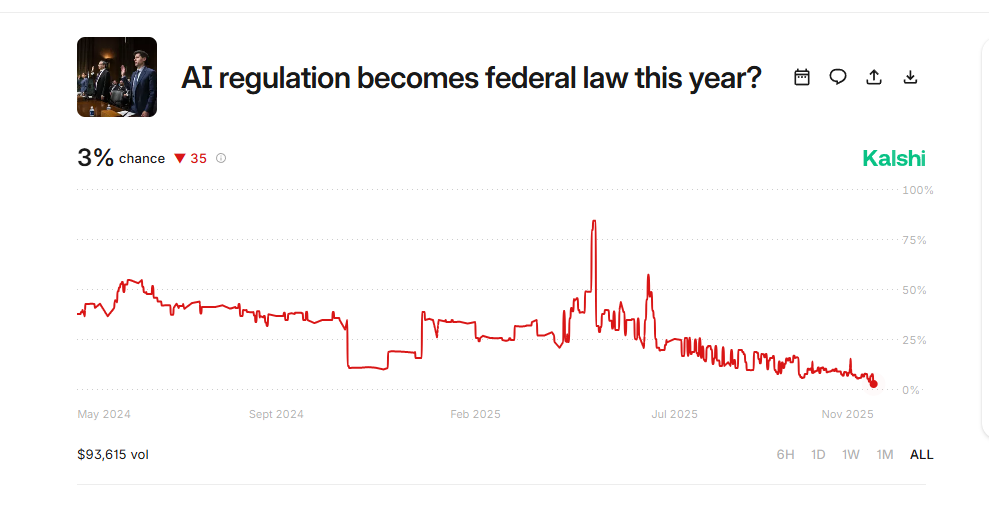

New data from prediction markets suggests that the US government will not enact AI regulation this year.

Data from Kalshi shows that the odds of AI regulation becoming law this year have plummeted to 3% after climbing as high as 84.6% in May.

Amid the falling odds, traders can buy a “Yes” contract for as little as $0.06, while those taking the other side of the bet will have to pay $0.96 per contract. So far, the market has accumulated $93,616 in bets.
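To put those contract prices in context, here is a minimal Python sketch of the arithmetic. It assumes each contract pays out $1.00 if its side wins and nothing otherwise, and it ignores trading fees. The function names are illustrative and are not part of any Kalshi API.

```python
# Illustrative sketch only. Assumes a $1.00 payout per winning contract
# and no trading fees; the function names are hypothetical.

PAYOUT = 1.00  # assumed payout per winning contract, in dollars


def implied_probability(price: float, payout: float = PAYOUT) -> float:
    """Probability implied by paying `price` for a contract worth `payout` if it wins."""
    return price / payout


def profit_if_correct(price: float, payout: float = PAYOUT) -> float:
    """Profit per contract if the chosen side wins."""
    return payout - price


yes_price = 0.06  # quoted "Yes" price from the article
no_price = 0.96   # quoted "No" price from the article

print(f"Yes implied probability: {implied_probability(yes_price):.0%}")
print(f"No implied probability:  {implied_probability(no_price):.0%}")
print(f"Yes profit if correct:   ${profit_if_correct(yes_price):.2f}")
print(f"No profit if correct:    ${profit_if_correct(no_price):.2f}")

# The two buy prices add up to slightly more than the assumed payout.
# The excess is the gap between the two quoted sides.
gap = yes_price + no_price - PAYOUT
print(f"Gap between quoted sides: ${gap:.2f}")
```

Under these assumptions, a “Yes” buyer risks $0.06 to win $0.94, while a “No” buyer risks $0.96 to win $0.04. Quoted buy prices are not necessarily the same figure as the headline odds.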

According to the market's rules:

“If a bill becomes law regulating large language models (for example, banning them, limiting how they can be trained, or limiting how they can be used) by Dec 31, 2025, then the market resolves to Yes. Outcome verified from Library of Congress.”

In May, US President Donald Trump signed into law the Take It Down Act, which criminalizes the non-consensual sharing of intimate images and deepfakes, and requires online platforms to establish a notice-and-takedown process for such content.

But the Kalshi market did not resolve to “Yes” when the law was signed, because it only covers the publication of digital forgeries.

“This does not regulate the creation, training, use, or export of large language models. A bill banning the creation of such images using large language models would be an example of a bill that would resolve the market to Yes.”

The news comes as AI experts sound the alarm on model safety issues. In a recent interview, OpenAI co-founder Ilya Sutskever warned that AI models will become more visibly powerful, prompting governments and the public to take action.

In September, the US Federal Trade Commission (FTC) opened a probe into how companies handle data from AI chatbots. The regulator sought to learn how companies measure and monitor negative impacts, process user inputs, generate outputs, and use information gleaned from chats, with a special focus on child safety.
