Criminals are now moving from stealing passwords to targeting the digital brains of personal AI assistants, according to a cybersecurity firm.

Hudson Rock says it has identified a real-world infection in which an infostealer malware program successfully exfiltrated a victim’s OpenClaw configuration files.

The company describes the incident as a turning point, marking a shift from stealing browser logins to harvesting what it calls the “souls” of AI agents.

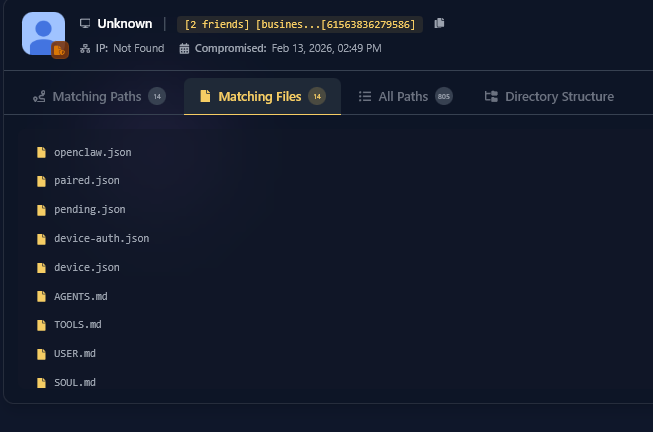

According to Hudson Rock, the malware was not specifically designed to target OpenClaw. Instead, it used a broad file-grabbing routine that scans infected machines for sensitive file types and folder names. In this case, it swept up an entire OpenClaw workspace by identifying directories such as .openclaw.
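For readers who want to check their own exposure, the name-based matching described above can be sketched from the defender's side. This is an illustrative inventory script, not Hudson Rock's analysis or the malware's actual logic. The function name `find_exposed_dirs` and the `WATCHLIST` set are hypothetical, and only the `.openclaw` directory name comes from the report.

```python
import os
from pathlib import Path

# Hypothetical watchlist: only ".openclaw" is named in the report.
WATCHLIST = {".openclaw"}


def find_exposed_dirs(root, watchlist=WATCHLIST):
    """Return directories under root whose name appears on the watchlist."""
    hits = []
    for dirpath, dirnames, _ in os.walk(root):
        # Iterate over a copy so matched names can be pruned from the walk.
        for name in list(dirnames):
            if name in watchlist:
                hits.append(Path(dirpath) / name)
                dirnames.remove(name)  # do not descend into a matched directory
    return sorted(hits)
```

Calling `find_exposed_dirs(Path.home())` would list every matching directory under the current user's home folder, which is roughly what a sweep keyed on folder names would find.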

The firm says the malware grabbed several key files that act like the control center and memory bank of the victim’s AI assistant, including openclaw.json and device.json. The files contain special access tokens and digital signatures that could allow the assistant to connect to online services, impersonate devices, bypass security checks and access protected data.
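Because files like these hold credentials, one basic hygiene step is to confirm they are not readable by other accounts on the same machine. The sketch below assumes a POSIX system and a workspace directory passed in by the caller. The function name `loosely_permissioned` is hypothetical, and the report does not describe the actual location or permissions of the victim's files.

```python
import stat
from pathlib import Path


def loosely_permissioned(workspace):
    """Return (path, mode) pairs for files that group or others can access (POSIX)."""
    flagged = []
    for path in sorted(Path(workspace).rglob("*")):
        if path.is_file():
            mode = stat.S_IMODE(path.stat().st_mode)
            # Any group or other permission bit counts as loose for a secrets file.
            if mode & (stat.S_IRWXG | stat.S_IRWXO):
                flagged.append((path, oct(mode)))
    return flagged
```

Tight permissions only limit exposure to other local users. They would not stop an infostealer running under the victim's own account, which is the scenario Hudson Rock describes.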

Hudson Rock adds that the malware also stole files that store the AI’s long-term memory, indicating that the attackers may have captured what amounts to a blueprint of the user’s digital life, including behavioral rules, stored memories and internal notes.

The cybersecurity firm warns that while this infection relied on a general file-sweeping method, future malware may be built specifically to target AI assistants.

Says Hudson Rock, “This case is a stark reminder that infostealers are no longer just looking for your bank login. They are looking for your context. By stealing OpenClaw files, an attacker does not just get a password; they get a mirror of the victim’s life, a set of cryptographic keys to their local machine, and a session token to their most advanced AI models.

“As AI agents move from experimental toys to daily essentials, the incentive for malware authors to build specialized ‘AI-stealer’ modules will only grow.”

Disclaimer: Opinions expressed at CapitalAI Daily are not investment advice. Investors should do their own due diligence before making any decisions involving securities, cryptocurrencies, or digital assets. Your transfers and trades are at your own risk, and any losses you may incur are your responsibility. CapitalAI Daily does not recommend the buying or selling of any assets, nor is CapitalAI Daily an investment advisor.